"We review 20 marketing metrics every week, but somehow nothing ever gets decided." This is one of the most common patterns I hear from EC operators. Collecting more metrics feels safer, but it actually slows decision-making down. The more KPIs you track, the harder it is to agree on which one to move first — and ownership quietly evaporates.

This article walks through a revenue-first approach to KPI design: 5 KPIs (Revenue / CVR / AOV / RPS / ROAS), how they relate to one another, how to prioritize them by business phase, and a 3-month review cycle that keeps the framework healthy.

Key takeaways

- Five KPIs are enough: Revenue / CVR (conversion rate) / AOV (average order value) / RPS (revenue per session) / ROAS (return on ad spend). These are not independent metrics — they are different cross-sections of the same revenue formula.

- Prioritize by reverse-engineering the formula: Revenue = Sessions × CVR × AOV. Which KPI is the lever depends on the business phase: launch teams need sessions, growth teams need CVR, scale teams need ROAS / RPS, mature teams need AOV.

- A team reviewing 5 KPIs weekly outperforms a team reviewing 20. The McNamara fallacy (overvaluing what's measurable, undervaluing what isn't) is the biggest enemy of KPI design.

1. The 5 KPIs every EC operator needs

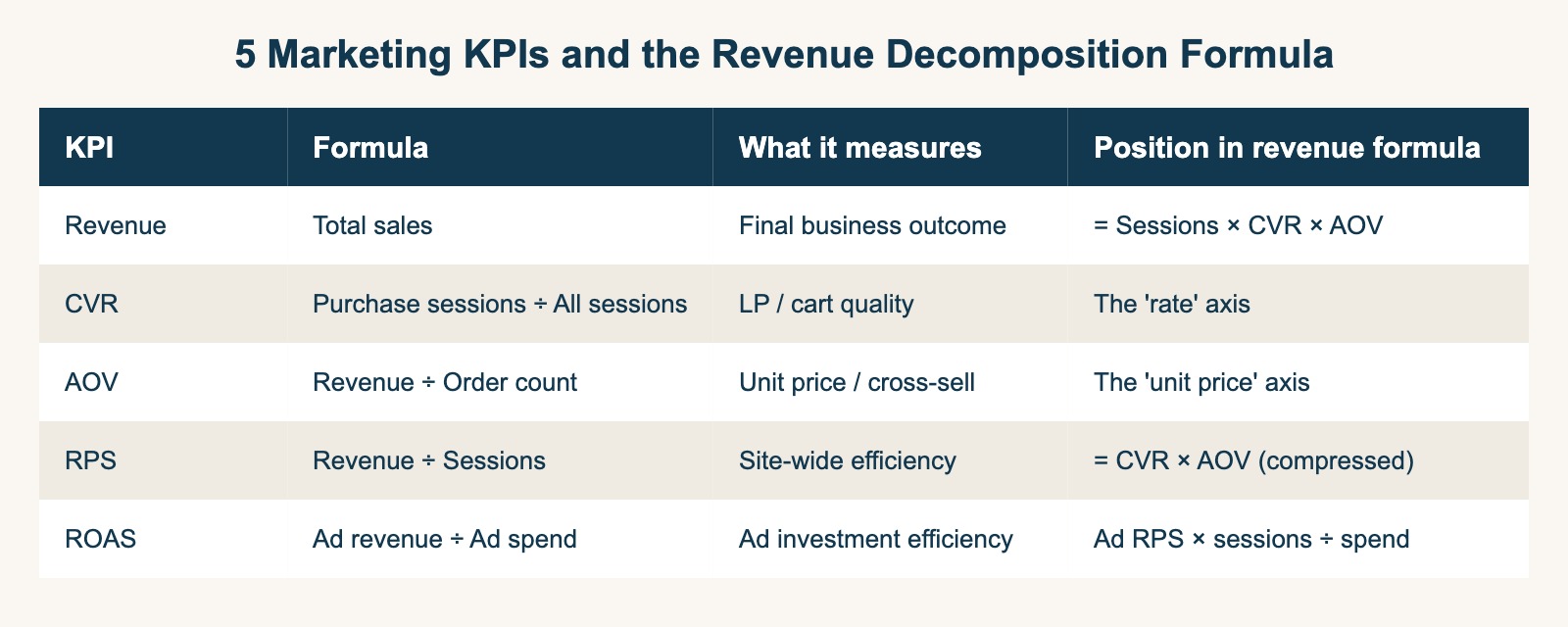

Most "KPIs" you see in marketing dashboards (monthly active users (MAU), page views (PV), social media (SNS) followers, open rate, bounce rate) are useful, but only a handful directly drive revenue decisions. The 5 that matter for e-commerce (EC) operators are:

| KPI | Definition | What it measures |
|---|---|---|
| Revenue | Total sales over a period | Final business outcome |
| CVR | Purchase sessions ÷ All sessions | LP / cart / product page quality |
| AOV | Revenue ÷ Order count | Unit pricing, cross-sell, discount strategy |
| RPS | Revenue ÷ Sessions = CVR × AOV | Site-wide revenue efficiency, channel comparison |
| ROAS | Ad-attributed revenue ÷ Ad spend | Per-campaign ad investment efficiency |

The thing that makes these 5 special is that they're all cross-sections of the same revenue formula, not independent dimensions. Once you see this, narrowing your dashboard to these 5 stops feeling like a sacrifice.

1.1 The revenue decomposition formula

The single most important framework in KPI design is this:

Revenue = Sessions × CVR × AOV

Compress CVR × AOV into a per-session number, and you get RPS (Revenue Per Session):

Revenue = Sessions × RPS

ROAS is the same logic, restricted to ad-driven traffic:

ROAS = Ad revenue / Ad spend

= (Ad sessions × Ad-channel RPS) / Ad spend

These 5 KPIs are not independent — they are the same equation projected onto different axes. Adding a 6th, 7th, 8th metric usually means you're tracking a derivative of one of these axes, not a new dimension.
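As a minimal sketch of the decomposition above, the snippet below derives all five KPIs from one set of raw totals and checks that the identities hold. The numbers, the `PeriodTotals` class, and the `core_kpis` helper are illustrative assumptions, not part of any tool. It also assumes one order per purchasing session, so that orders ÷ sessions approximates CVR.

```python
from dataclasses import dataclass


@dataclass
class PeriodTotals:
    """Raw totals for one reporting period (all values are made-up examples)."""
    sessions: int
    orders: int
    revenue: float
    ad_revenue: float  # revenue attributed to ads
    ad_spend: float


def core_kpis(t: PeriodTotals) -> dict[str, float]:
    """Derive the five KPIs from raw totals."""
    cvr = t.orders / t.sessions      # assumes one order per purchasing session
    aov = t.revenue / t.orders
    rps = t.revenue / t.sessions
    roas = t.ad_revenue / t.ad_spend
    return {"revenue": t.revenue, "cvr": cvr, "aov": aov, "rps": rps, "roas": roas}


week = PeriodTotals(sessions=40_000, orders=800, revenue=56_000.0,
                    ad_revenue=21_000.0, ad_spend=6_000.0)
kpis = core_kpis(week)

# The identities from the text hold up to floating-point error.
assert abs(week.sessions * kpis["cvr"] * kpis["aov"] - kpis["revenue"]) < 1e-6
assert abs(kpis["cvr"] * kpis["aov"] - kpis["rps"]) < 1e-9
print(kpis)
```

Because every KPI here is computed from the same five raw totals, a sixth metric earns its place only if it needs a raw input that is not already in `PeriodTotals`.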

1.2 Why 5 is enough

The McNamara fallacy — overvaluing what is easily measurable and dismissing what isn't — is the biggest hidden enemy of KPI dashboards[1]. Three reasons 5 is sufficient:

- Coverage: Sessions (the traffic term inside Revenue) plus CVR / AOV / RPS / ROAS together cover who arrives, who buys, how much they buy, site efficiency, and ad efficiency. Most revenue questions map onto one of these.

- Readability: With 5 metrics, you can hold their interactions in your head. With 20, "tracking the correlation between metrics" becomes the job itself.

- Ownership: 5 metrics map cleanly to roles — CVR to LP / cart team, AOV to merchandising, ROAS to paid media, RPS to growth lead. With 20, no single person owns the outcome.

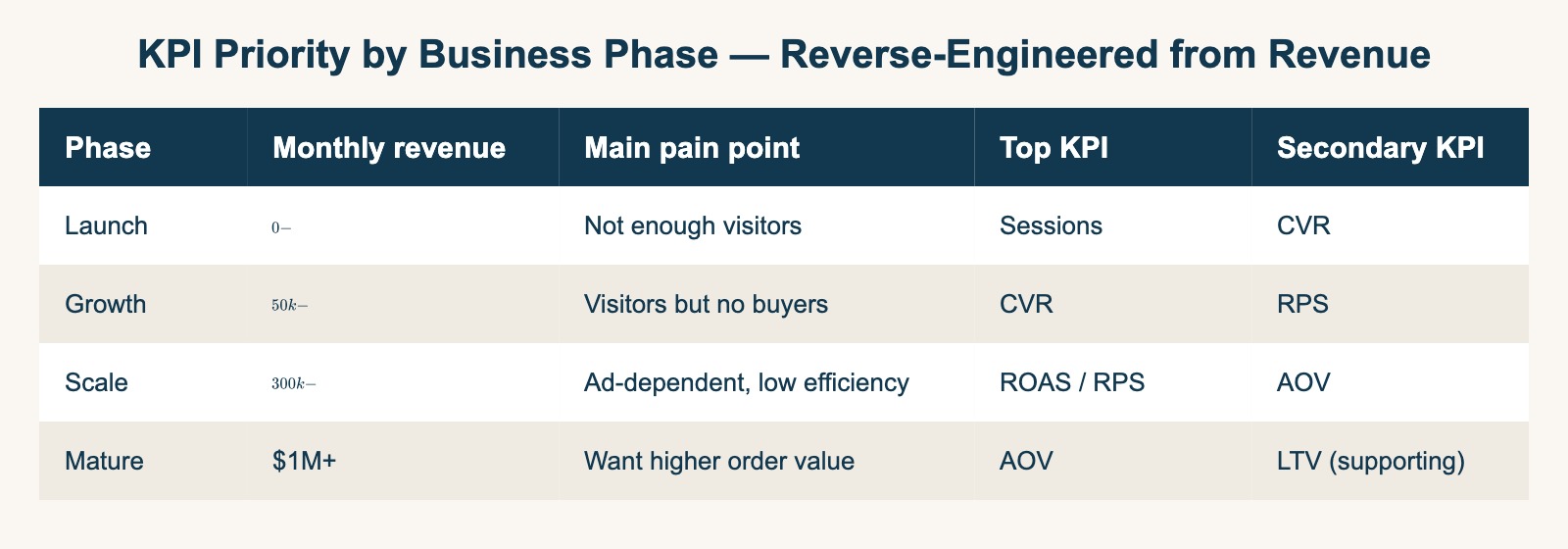

2. Priority by business phase: reverse-engineer from revenue

Which single KPI to push depends on where the business is. The decomposition formula shows which term is the bottleneck.

| Phase | Monthly revenue | Pain point | Top KPI | Secondary |
|---|---|---|---|---|
| Launch | $0 - $50k | Not enough visitors | Sessions | CVR |
| Growth | $50k - $300k | Visitors but no buyers | CVR | RPS |
| Scale | $300k - $1M | Ad-dependent, low efficiency | ROAS / RPS | AOV |
| Mature | $1M+ | Want higher order value | AOV | LTV (supporting) |
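The phase table can be read as a simple lookup. The sketch below encodes it that way. The `PHASES` list and `phase_for` function are hypothetical names, and assigning a boundary value such as exactly $50k to the higher phase is an assumption, since the table leaves the boundaries ambiguous.

```python
PHASES = [
    # (upper bound of monthly revenue in USD, phase, top KPI, secondary KPI)
    (50_000, "Launch", "Sessions", "CVR"),
    (300_000, "Growth", "CVR", "RPS"),
    (1_000_000, "Scale", "ROAS / RPS", "AOV"),
    (float("inf"), "Mature", "AOV", "LTV (supporting)"),
]


def phase_for(monthly_revenue: float) -> tuple[str, str, str]:
    """Return (phase, top KPI, secondary KPI) for a monthly revenue figure."""
    for upper, phase, top, secondary in PHASES:
        if monthly_revenue < upper:
            return phase, top, secondary
    raise ValueError("monthly_revenue must be a finite number")


print(phase_for(120_000))
```

Revenue bands are only a first guess at the phase. The pain-point column is the better check on whether the lookup matches reality.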

"Everything is important" is not a strategy. Picking the single KPI that has the highest revenue elasticity for the current phase sets the priority for every team meeting that quarter.

2.1 ROAS vs RPS: the most misused pair

The most common KPI mistake is using ROAS as the only metric for ad budget decisions. ROAS measures campaign-level ad efficiency (denominator: ad spend), while RPS measures site-wide revenue efficiency (denominator: sessions). They answer different questions.

If your high-ROAS Meta campaign brings in visitors with a lower RPS than your organic search visitors, scaling that campaign actually drags down your overall revenue efficiency. ROAS alone hides this. ROAS + RPS together tells you whether you're over-relying on paid traffic — a higher-order judgment call.
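A small numeric sketch makes the effect concrete. All figures are invented, and the scenario assumes the campaign's ROAS and RPS stay constant when its budget doubles, which real campaigns rarely manage.

```python
channels = {
    # illustrative numbers only
    "organic_search": {"sessions": 20_000, "revenue": 40_000.0, "ad_spend": 0.0},
    "meta_ads": {"sessions": 10_000, "revenue": 12_000.0, "ad_spend": 3_000.0},
}


def rps(channel):
    return channel["revenue"] / channel["sessions"]


def roas(channel):
    # ROAS is undefined for channels with no ad spend
    return channel["revenue"] / channel["ad_spend"] if channel["ad_spend"] else None


def blended_rps(chs):
    total_revenue = sum(c["revenue"] for c in chs.values())
    total_sessions = sum(c["sessions"] for c in chs.values())
    return total_revenue / total_sessions


before = blended_rps(channels)

# Double the Meta campaign, assuming its RPS and ROAS do not change.
scaled = {**channels,
          "meta_ads": {k: v * 2 for k, v in channels["meta_ads"].items()}}
after = blended_rps(scaled)

print(f"Meta ROAS: {roas(channels['meta_ads']):.1f}")
print(f"Meta RPS: {rps(channels['meta_ads']):.2f} vs organic RPS: {rps(channels['organic_search']):.2f}")
print(f"Blended RPS before: {before:.2f}, after scaling: {after:.2f}")
```

In this example total revenue still rises after scaling, but each session earns less on average. ROAS alone would not show that trade-off.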

For ROAS calculation, industry benchmarks, and improvement tactics, see The Complete Guide to ROAS.

2.2 Leading vs lagging indicators

Revenue is a lagging indicator: you can't move it directly. Sessions / CVR / AOV / RPS / ROAS are leading indicators: they're what your team can actually push.

Weekly KPI reviews should open with revenue (the lag) and close with which leading indicator (CVR / AOV / RPS / Sessions / ROAS) is the cause. If your meeting only discusses revenue, you have no path to action.
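One way to get from the revenue change to its cause is a log decomposition. Because Revenue = Sessions × CVR × AOV, the log of the revenue ratio equals the sum of the log ratios of the three terms. The sketch below uses invented weekly figures, and `decompose_change` is a hypothetical helper name.

```python
import math


def decompose_change(prev, curr):
    """Split the log change in revenue into Sessions, CVR and AOV terms."""
    parts = {k: math.log(curr[k] / prev[k]) for k in ("sessions", "cvr", "aov")}
    parts["revenue"] = sum(parts.values())  # sum of the three terms above
    return parts


last_week = {"sessions": 40_000, "cvr": 0.020, "aov": 70.0}
this_week = {"sessions": 42_000, "cvr": 0.018, "aov": 71.0}

parts = decompose_change(last_week, this_week)
driver = max(("sessions", "cvr", "aov"), key=lambda k: abs(parts[k]))

for name, value in parts.items():
    print(f"{name:>8}: {value:+.3f}")
print("largest driver:", driver)
```

Here sessions and AOV both rose, but the CVR drop outweighs them, so the meeting would close on CVR rather than on revenue.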

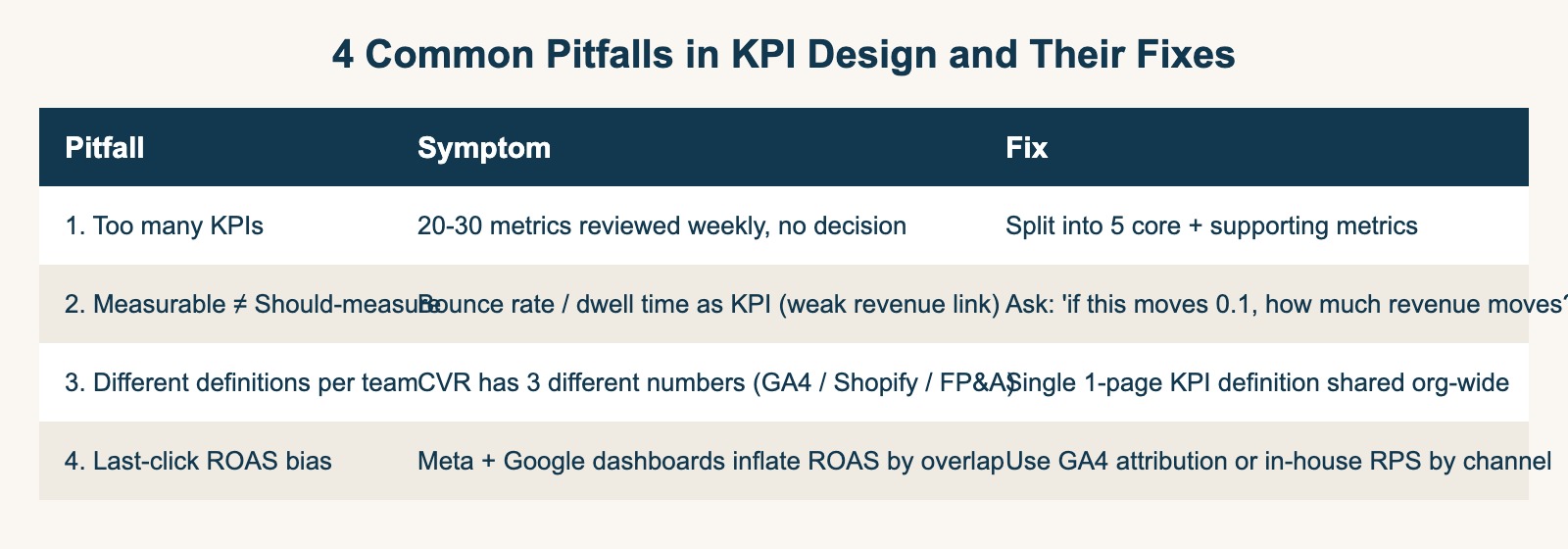

3. Four common pitfalls in KPI design

3.1 Too many KPIs, no decisions

The most common failure. Driven by "we should be data-driven," teams pile up 20-30 weekly metrics. The unintended consequence is that the meta-discussion ("which 5 of these 20 actually matter?") consumes the meeting, and the actual decision never happens.

Fix: Pick 5 core KPIs (Revenue / CVR / AOV / RPS / ROAS) for weekly review. Move everything else to a monthly or quarterly "supporting metrics" tier. In the weekly meeting, supporting metrics simply do not appear.

3.2 Measurable ≠ should-measure

Bounce rate, dwell time, and pages-per-session are easy to pull from GA4 — but their correlation with revenue is weak. Optimizing for them rarely moves the bottom line. Yet they appear on KPI dashboards everywhere because they're easy to extract.

Fix: For every candidate KPI, ask: "If this metric moves by 0.1 (in its own unit, for example 0.1 percentage points of CVR), how many dollars of revenue follow?" If the answer is hand-wavy, the metric is supporting at best, not core.
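For metrics that sit inside the revenue formula, the question has a direct numeric answer. The sketch below holds the other terms fixed and reports the dollar change. The baseline is invented, `dollar_impact` is a hypothetical helper, and reading "0.1" as 0.1 percentage points of CVR or $0.10 of AOV is an assumption.

```python
def revenue(sessions, cvr, aov):
    return sessions * cvr * aov


def dollar_impact(base, **changes):
    """Revenue change when some terms of the formula shift, others held fixed."""
    unknown = set(changes) - set(base)
    if unknown:
        raise KeyError(f"not a term of the revenue formula: {sorted(unknown)}")
    moved = {k: base[k] + changes.get(k, 0.0) for k in base}
    return revenue(**moved) - revenue(**base)


base = {"sessions": 40_000, "cvr": 0.020, "aov": 70.0}

cvr_impact = dollar_impact(base, cvr=0.001)  # +0.1 percentage points of CVR
aov_impact = dollar_impact(base, aov=0.10)   # +$0.10 of AOV

print(f"CVR +0.1pt: ${cvr_impact:,.0f}")
print(f"AOV +$0.10: ${aov_impact:,.0f}")
```

A metric such as bounce rate has no slot in `revenue()`, so `dollar_impact` raises a `KeyError` for it. That is the code version of a hand-wavy answer.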

3.3 Different definitions across teams

In one company I've seen "CVR" defined three different ways across three teams: marketing (sessions-based, GA4 default), EC operations (unique-user-based, Shopify default), and FP&A (monthly-visitors-based, custom). The first 30 minutes of every KPI review went to reconciling which CVR was "real."

Fix: A single 1-page KPI definition document, shared org-wide. Every KPI lists numerator / denominator / aggregation unit / time period. New KPIs cannot be added until they're written into this document.
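The definition document can also live as a small data structure, which makes the "not in the document, not on the dashboard" rule enforceable. This is a sketch: `KpiDefinition` and `KpiRegistry` are hypothetical names, and the field values are examples rather than recommended definitions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KpiDefinition:
    name: str
    numerator: str
    denominator: str
    aggregation_unit: str
    time_period: str


class KpiRegistry:
    """Single source of truth: one definition per KPI name."""

    def __init__(self):
        self._defs = {}

    def register(self, definition):
        existing = self._defs.get(definition.name)
        if existing is not None and existing != definition:
            raise ValueError(f"{definition.name} is already defined differently")
        self._defs[definition.name] = definition

    def get(self, name):
        if name not in self._defs:
            raise KeyError(f"{name} is not in the KPI definition document")
        return self._defs[name]


registry = KpiRegistry()
registry.register(KpiDefinition(
    name="CVR",
    numerator="purchase sessions",
    denominator="all sessions",
    aggregation_unit="session",
    time_period="weekly",
))
print(registry.get("CVR"))
```

A second team trying to register a unique-user-based "CVR" would get a `ValueError`, which moves the definitional argument out of the weekly review.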

3.4 Last-click ROAS bias

Reading ROAS straight from each ad platform's dashboard double-counts overlapping conversions. Meta's dashboard says "I drove that conversion." Google's says the same. Both are partially right, because each platform credits itself under its own attribution rules. Summing them overstates the true ROAS by a factor that grows with multi-touch overlap.

Fix: Use GA4's data-driven attribution report or your own session log to calculate ROAS by channel with consistent attribution. See GA4 attribution blind spots and The last-click trap for the longer story.
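The overstatement is easy to see with a toy order log. In the sketch below, every order ID, value, and spend figure is invented. The even split between claiming platforms is a deliberately simple stand-in for a consistent attribution rule, not GA4's data-driven model.

```python
order_value = {"o1": 100.0, "o2": 80.0, "o3": 120.0, "o4": 60.0}
# Orders each platform's own dashboard takes credit for (hypothetical).
claims = {"meta": {"o1", "o2", "o3"}, "google": {"o2", "o3", "o4"}}
ad_spend = {"meta": 100.0, "google": 80.0}

# Revenue as each dashboard would report it.
claimed = {p: sum(order_value[o] for o in ids) for p, ids in claims.items()}

# Revenue counted once per order.
all_claimed_orders = set().union(*claims.values())
deduped_revenue = sum(order_value[o] for o in all_claimed_orders)
overstatement = sum(claimed.values()) / deduped_revenue

# Consistent rule: split each order evenly among the platforms that claim it.
credited = {p: 0.0 for p in claims}
for order_id in all_claimed_orders:
    claimers = [p for p, ids in claims.items() if order_id in ids]
    for p in claimers:
        credited[p] += order_value[order_id] / len(claimers)

reported_roas = {p: claimed[p] / ad_spend[p] for p in claims}
consistent_roas = {p: credited[p] / ad_spend[p] for p in claims}

print("reported:", reported_roas)
print("consistent:", consistent_roas)
print(f"overstatement factor: {overstatement:.2f}")
```

With two of four orders claimed by both platforms, the summed dashboards report about 1.56 times the revenue that actually exists. More overlap pushes that factor higher.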

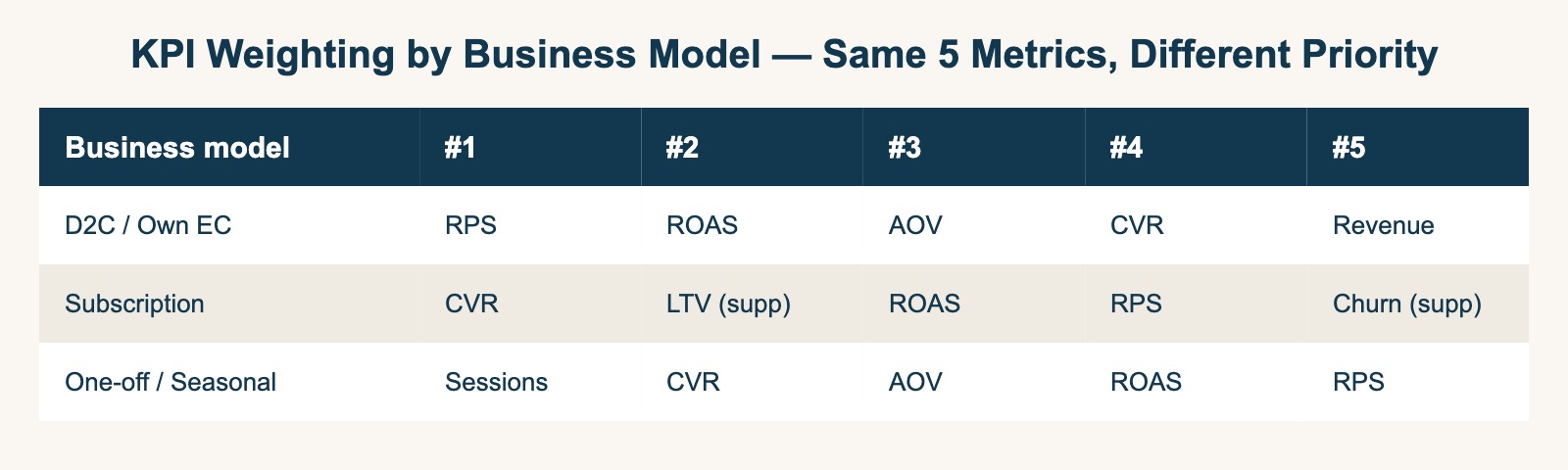

4. KPI weighting by business model

The 5 KPIs are universal; the weights are not.

| Business model | #1 | #2 | #3 | #4 | #5 |
|---|---|---|---|---|---|
| D2C / Own EC | RPS | ROAS | AOV | CVR | Revenue |
| Subscription | CVR | LTV (supp) | ROAS | RPS | Churn (supp) |
| One-off / Seasonal | Sessions | CVR | AOV | ROAS | RPS |

The 5 KPIs themselves don't change. Only the weighting changes — which keeps the framework portable across business models without becoming a different framework every time.

5. Quarterly review cadence

KPIs are not a one-time setup. They need a 3-month cadence to stay aligned with the actual phase of the business. At each quarterly review, ask three questions of every KPI:

- Did this KPI move from any of the team's actions in the past 3 months? If not, the team's work isn't connected to it — or the definition isn't connected to action.

- If this KPI moves by 0.1 (in its own unit, such as 0.1 percentage points of CVR), how much revenue moves? If the answer is unclear, demote it to a supporting metric.

- Is the owner clear? If not, reassign or drop the KPI.

KPIs that survive all three questions are kept. The rest go to supporting tier or get retired.
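The three questions can be run as a checklist per KPI. The sketch below records the answers and returns a verdict with the reasons. The `KpiReview` fields and the `quarterly_verdict` function are hypothetical names, and the example entries are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class KpiReview:
    name: str
    moved_by_team_actions: bool  # question 1
    revenue_impact_known: bool   # question 2
    owner: Optional[str]         # question 3


def quarterly_verdict(kpi: KpiReview) -> tuple[str, list[str]]:
    """Return the verdict plus the questions the KPI failed."""
    failed = []
    if not kpi.moved_by_team_actions:
        failed.append("not moved by team actions")
    if not kpi.revenue_impact_known:
        failed.append("revenue impact unclear")
    if not kpi.owner:
        failed.append("no clear owner")
    verdict = "keep" if not failed else "supporting tier or retire"
    return verdict, failed


reviews = [
    KpiReview("CVR", True, True, "LP / cart team"),
    KpiReview("Bounce rate", False, False, None),
]
for r in reviews:
    print(r.name, quarterly_verdict(r))
```

Keeping the failed questions alongside the verdict gives the team a record of why a metric was demoted when someone proposes bringing it back.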

Improvement KPIs vs health KPIs

Once you've trimmed the list, split it again into two:

- Improvement KPIs: the 2-3 metrics you actively want to move this quarter

- Health KPIs: the 2-3 metrics that should hold a baseline (alerted only when out of range)

Weekly meetings discuss improvement KPIs only. Health KPIs surface only when they breach a threshold. This split alone tends to halve meeting time.
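A sketch of how the split could drive the weekly agenda: improvement KPIs always appear, and health KPIs appear only on a breach. The ranges, the choice of which KPIs fall in each group, and the `weekly_agenda` helper are all illustrative assumptions.

```python
IMPROVEMENT_KPIS = ["cvr", "rps"]
# Acceptable (low, high) range for each health KPI; values are examples.
HEALTH_RANGES = {"aov": (60.0, 90.0), "roas": (2.5, float("inf"))}


def weekly_agenda(current, improvement=IMPROVEMENT_KPIS, health_ranges=HEALTH_RANGES):
    """Improvement KPIs always appear; health KPIs only when out of range."""
    agenda = list(improvement)
    for name, (low, high) in health_ranges.items():
        value = current[name]
        if not (low <= value <= high):
            agenda.append(f"{name} (health breach: {value})")
    return agenda


this_week = {"cvr": 0.020, "rps": 1.40, "aov": 70.0, "roas": 2.1}
print(weekly_agenda(this_week))
```

In a quiet week the agenda holds only the improvement KPIs. Here ROAS has dropped below its floor, so it joins the agenda for this one meeting.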

6. Where RevenueScope fits

The 5 KPIs above all derive from a single decomposition: Sessions × CVR × AOV = Revenue. The hard part is not the framework — it's slicing this decomposition by channel and campaign in a single view.

GA4 is excellent at traffic measurement, but its default dashboards lean toward behavior metrics (sessions, PV, bounce rate). Revenue-first metrics (RPS by channel, ROAS by campaign, CVR by LP) require non-trivial setup. Ad platforms give you ROAS for their own channel — never the cross-channel RPS comparison.

We're building RevenueScope precisely for this: a thin analytics layer that sits alongside GA4 and reads from your existing dataLayer, then surfaces channel-level RPS, site-wide CVR, and AOV in a single dashboard. If you already have GA4 ecommerce events configured, RevenueScope needs almost no additional setup.

For ROAS, you'll continue reading it from your Meta / Google ad dashboards. The point is to put RevenueScope's RPS next to those ROAS numbers: a high-ROAS campaign whose visitors have a lower RPS than your organic search traffic is actually pulling your site-wide revenue efficiency down. The dashboard ROAS alone hides this — ROAS + cross-channel RPS together is what makes the 5-KPI framework actionable.

The longer-term goal is to shift teams from "we review 20 KPIs weekly" to "we review 5 KPIs weekly and actually decide." UTM hygiene matters too — if your UTMs are broken, RPS and ROAS by channel are broken with them. See UTM parameters: 4 patterns breaking GA4 channel grouping for the foundation.

References

[1] Yankelovich, Daniel. "Corporate Priorities: A Continuing Study of the New Demands on Business." 1972 (origin of the McNamara fallacy)

[2] Google Analytics. "\[GA4\] Default channel group" 2024

[3] Google Analytics. "\[GA4\] Conversions" 2024

[4] Shopify Plus. "BFCM 2024 Recap" December 2024

[5] HubSpot Research. "State of Marketing Report 2025" 2025

[6] Forrester Research. "Marketing Measurement and Optimization Solutions" 2024

[7] Harvard Business Review. "The CMO's Guide to Marketing Metrics" 2023
